Fairbanks and 42on at the OpenInfra Summit Berlin

The OpenInfra Summit is a global event for open source IT infrastructure professionals to collaborate on software development, share best practices about designing and running infrastructure in production, and make partnership and purchase decisions. This year it is held in Berlin on June 7, 8, and 9 and brings together more than 2,000 influential IT […]

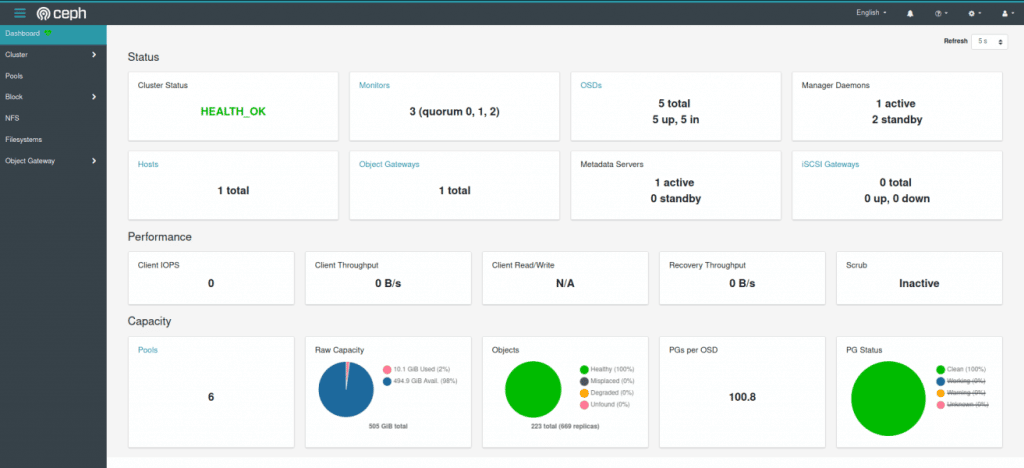

Deploying a Ceph cluster with cephadm

Cephadm deploys and manages a Ceph cluster. It does this by connecting the manager daemon to hosts via SSH. The manager daemon is able to add, remove, and update Ceph containers. In this blog, I will provide you with a little more information about deploying a Ceph cluster using cephadm. Cephadm manages the full lifecycle of a […]

About RBD, Rados Block Device

For anybody who enjoyed Latin pop in the 2000s, RBD was a popular Mexican pop band from Mexico City signed to EMI Virgin. The group achieved international success from 2004 until their separation in 2009 and sold over 15 million records worldwide, making them one of the best-selling Latin music artists of all time. The group […]

Managing containerized Ceph clusters with cephadm

Cephadm is a great new feature of Ceph v15.2.0 (Octopus). By using cephadm, users have more freedom and flexibility to think about future migration paths. But what is cephadm exactly? Well, the goal of cephadm is to provide a fully-featured, robust, and well-maintained installation method. It deploys Ceph in containers while not depending […]

A huge thanks to Sage Weil

Ceph was developed by Sage Weil in 2003 as a part of his Ph.D. project. It was open sourced in 2006 to serve as a reference implementation and research platform. DreamHost, a Los Angeles-based web hosting and domain registrar company also co-founded by Sage Weil, supported Ceph development from 2007 to 2011. During this period Ceph as we know it took shape: the […]

The importance of upgrading and updating Ceph consistently

During our Ceph consultancy projects, we often get questions about Ceph versions. One we get a lot is which Ceph version to use: the latest or an earlier one? Another is whether running different Ceph versions on one cluster is a bad thing. I thought it might […]

Best practices for cephadm and expanding your Ceph infrastructure

If you want to expand your Ceph cluster by adding a new one, it is good to know what the best practices are. So, here is some more information about cephadm and some tips and tricks for expanding your Ceph cluster. First, a little bit about cephadm. Cephadm creates a new Ceph cluster by “bootstrapping” […]

What about our Ceph Fundamentals training?

Currently, we are planning a new Ceph Fundamentals training course for November or December. Because of this, we thought it would be nice to share some more information on the topics of this training course. The 42on Ceph Fundamentals Training is a 2-day online, instructor-led training course. The training is led by one of our […]

Improving some aspects of your Ceph cluster

Because performance optimization research can cost a lot of time and effort, here are some steps you might want to try first to improve your Ceph cluster overall. The first step is to deploy Ceph on newer releases of Linux, and on releases with long-term support. Secondly, update your hardware design and service placement. As […]

Best practices for CephFS

File storage is a data storage format in which data is stored and managed as files within folders. Why would you choose to use file storage? Well, the advantages of file storage include the following: 1. User-friendly interface: A simple file management and sharing system that is easy for human users to understand, which makes […]